Joan Slonczewski is both a microbiologist (she is co-author of one of the standard textbooks in the field) and a science fiction writer. Her latest sf novel, MINDS IN TRANSIT, is a sequel to her previous novel BRAIN PLAGUE (2000), and overall the fifth novel in her Elysium Cycle (to which most of her novels belong). It would probably help to have read BRAIN PLAGUE before tackling MINDS IN TRANSIT. We have a pair of planets with future technologies, the most important of which is that microbes are sentient, along with many artificial entities and systems. So the people in this world are continually negotiating both with one another and with the million microbes who inhabit them. There are evil microbes who take over their human inhabitants by manipulating their pleasure and pain systems, but most people get along with the microbes that inhabit them in a more or less symbiotic fashion. For instance, the main character Chrys is an artist, and her visual works are collaborations with the microbes within her. The novel mostly consists in all sorts of social and political interactions among the characters, including the microbial ones, and there is no clear line separating social interactions from political power moves. This may sound cynical, but the novel really is not so. The science fictional novum of intelligent microbes is really a way to dramatize how all life involves interactions among multiple life forms, all of which shape and are shaped by the physical environment as well as by one another. Interactions can exist anywhere along the spectrum from complete symbiotic mutualism to one-sided parasitic exploitation. And in fact, IRL our lives are profoundly shaped by such interactions, even if many of the partners (like the microbes that actually do live within our bodies) are not in actuality capable of language and conscious reflection.
Slonczewski powerfully illustrates how the mutual web of life really works, through the extrapolative tactic of extended sentience. The plot, such as it is, is quite convoluted, but this makes total sense, given the ways that the book is depicting and making visible the sorts of connections and disconnections that all living beings are involved in. We are all — people, animals, plants, fungi, and microbes alike — involved with one another in multiple ways, involving both unit integrity and interconnections that mean that no unit is actually self-enclosed.

Charlie Jane Anders, LESSONS IN MAGIC AND DISASTER

Charlie Jane Anders’ new novel, LESSONS IN MAGIC AND DISASTER, is a fantasy novel with a light touch — sufficiently light that it is barely fantasy at all. The fantasy element — the practice of magic — is barely an extension of the actual world we live in. Jamie, the narrator/protagonist, is a trans woman: a graduate student in English writing her dissertation on British women writers in the 18th century. She is involved in a difficult relationship with somebody who I presume is also a woman, though this is never explicitly stated, since this person uses they/them pronouns. The narrator’s other most important (and difficult) relationship is with her mother, who is mourning the death of her own partner (another woman). So this book is really about queer families, and about personal connections: those we cannot help being part of, and those we construct for ourselves. We all need, and most of us have, family relationships of one sort or another, though this is true without necessarily privileging the heterosexual nuclear family, and without denying the difficulties of such relationships (William Blake once wrote that “A man’s worst enemies are those/ Of his own house and family”).

In addition, the narrator is a practitioner of magic. Here, magic is understood as a way to manifest one’s own intentions. It involves finding a place that exists in between nature and culture, like human refuse left in the woods or some other natural spot, and leaving in that spot several objects: something that symbolizes or references what is being wished for, and something that is given up as a sacrifice. There can also be additional adornments. Jamie explains, somewhat dubiously: “it’s about knowing what you really want, in your fucking secret heart, and putting your wishes into the world in a way that can be heard.”

In the course of the novel, Jamie teaches her mother how to do magic, in an attempt to cure her mourning for her late partner. Magic sometimes works, but not always. It can have unanticipated consequences (as all forms of desire can have). And it sometimes blows back on the practitioner. Also, since one’s wishes generally involve relationships with other people, there are problems with what those other people — lovers, family members, friends, enemies, innocent bystanders — might themselves want and need.

Though personally, I do not believe that magic, as defined in the novel, actually works in the real world, it makes powerful sense in the novel as an intensified form of all the delights and dangers that come up when we negotiate our desires with other people (whom we may desire, love, or hate, and who in their own turn have their own equally complex desires). The workings of magic are also depicted as liminal (in between nature and culture, in between what we feel consciously and unconsciously, and so on), which makes it impossible to draw clean lines between realms as we all too often want to do. And the practice of magic also occurs within a world that, like the world we actually live in, involves both individual and collective dimensions, both the personal and the political, if only because bigotry is real, and homophobes interfere with the lives of people who just want to continue doing what they do.

The hardest thing for me to describe about this novel is its mood or tone, which is delicate and hopeful, but also all too aware of the obstacles other people and the very structure of the world place in our way, as well as the ways we harm ourselves and others, make mistakes, treat other people (even our loved ones) unfairly, and generally have difficulty separating joy from pain, generosity from selfishness, or satisfaction from regret. In a way, this is therefore a mundane novel, or a ‘realist’ one; except for how the existence of magic in the narrative, and in the lives of its characters, serves as a form of self-therapy, and a way to connect with others. This other-directed dimension is what distinguishes it from the more common first-person realist narrative, and what allows it, ever so lightly, to access, and use to its advantage, the subjunctive dimension (as Samuel R. Delany might call it) of non-naturalistic fiction.

Adrian Tchaikovsky, SHROUD

SHROUD is a look at alien sentience, by the (alarmingly prolific) science fiction writer Adrian Tchaikovsky. Two engineers are stuck in their spacecraft (which is actually more like a bathysphere) on the surface of an alien planet they call Shroud. Shroud is actually a moon of a gas giant in some solar system far from ours; but it is larger and denser (and hence with greater gravity) than Earth. It is enclosed in a thick hydrogen/methane/ammonia atmosphere, so thick that atmospheric pressure at the surface is twenty times that of Earth at sea level, and also so thick that no light can get through. Even the searchlights of the spacecraft can only cut through the murk for small distances. The astronauts are stranded; they have to travel halfway across the planet to reach the space elevator cable, dropped by the spaceship they originally came in, which is the only point from which they can be rescued (and indeed the only point from which any message they send can reach space beyond Shroud’s atmosphere at all). It turns out that Shroud is filled with rich and abundant life, which gets its energy from “planetary radiation, vulcanism, and a tiny greenhouse effect” (in this respect, Shroud is similar to some of the moons of Jupiter and Saturn). As the protagonists struggle to move across the surface of Shroud, they interact in multiple indirect ways with the native life, which is blind (since there is no light on Shroud) but which senses its surroundings by emitting radio waves (radar sensing, communication, and thought, all in the same medium). The human protagonists’ survival involves interacting with these native life forms in all sorts of ways. The novel is both an adventure story, and a sort of philosophical meditation on the possibilities of sentience.

Donald Trump is not conscious

Donald J. Trump is not conscious. This is my ultimate thesis. That is to say, Trump is what the philosopher David Chalmers calls a “philosophical zombie”. Such beings “are exactly like us in all physical respects but without conscious experiences: by definition there is ‘nothing it is like’ to be a zombie. Yet zombies behave just like us, and some even spend a lot of time discussing consciousness.”

Chalmers is of course responding to a long line of philosophical questioning. Descartes claimed “I think, therefore I am”, but he had difficulty extrapolating from his own certainty about himself to other people; he worried that this formulation was consistent with the idea that other people might simply be automata with no inner consciousness; and his effort to get around this problem was sort of lame and unconvincing (he relied upon God to make sure that other people were similar to himself). It makes more sense to accept other people’s claims to be conscious in the same way that I am by the principle of philosophical charity: I should not deny your claim to be conscious without a good reason; rather, my presumption should always be that you are conscious, as you claim, just as I claim to be; even though I do not have the direct experience of consciousness in your case, only in my own.

So when I claim that Trump is not conscious, I am relying upon extraordinary evidence rather than making the claim just on general principle. One way to understand consciousness is to see its existence in the form of having feelings or experiences; or more specifically (according to philosophical argument) in the form of the experience of qualia. One feels happy or sad; one has pleasure or pain; one has a sense of the color red that is different from a sense of the color blue.

Babies give evidence of having experiences and feelings; so do familiar animals like dogs and cats. Recent scientific experiments have extended the scope of this, suggesting that other animals like lobsters and insects have conscious or qualitative experiences as well. Several studies have suggested that bees have moods, more or less shared among all the individuals in a hive.

The question of what it would mean to determine empirically, from an entity’s outward behavior, whether it has inner experiences is obviously a difficult one. Still, Trump appears unique among human beings for the way that he never displays signs of pleasure or pain. It is evident to me that even mosquitoes have a certain sense of aesthetic satisfaction; but Trump doesn’t seem to. He entirely subsumes aesthetic categories into real estate sales (think of his use of the word “beautiful”). Trump clearly craves power and self-aggrandizement; but in his case, these seem to be autonomic imperatives, rather than being produced by inner drives (this is why Trump is so different from Nixon. Nixon’s complicated and twisted inner drives would have fascinated Freud, but Freud could not have had anything to say about Trump).

I will stop here, but I could go on indefinitely. The point is that nearly all living things, even bacteria, seem to have what philosophers sometimes call what-it-is-likeness (from Thomas Nagel’s famous article “What Is It Like to Be a Bat?”). But Trump just IS; it is not like anything to be him.

WAVE WITHOUT A SHORE, by C J Cherryh

I just finished reading C J Cherryh’s novella WAVE WITHOUT A SHORE (one of the three texts in the collection ALTERNATE REALITIES, available on the Kindle for $9.99). WAVE WITHOUT A SHORE is a brilliant work of science fiction. It takes place on a planet where powerful men (more often men than women) believe that they create their own reality and impose it on anybody “weaker” than themselves. They simply deny the existence of what is not within their will, learning to not even notice the existence of others who are excluded from their social arrangements (such others include both human beings who have been shamed and demoted or expelled from society, and non-human intelligent beings). (This reminds me a bit of the way in which, in China Miéville’s THE CITY AND THE CITY, the people of the two cities have learned to ignore their mutual co-existence, each person unseeing the people of the other city).

The protagonist of WAVE WITHOUT A SHORE, Herrin, is a sculptor who is fatuously confident of his own superiority and genius; the only person he recognizes as perhaps an equal is his frenemy, Waden Jenks, the dictator ruling human society on the planet. Herrin makes a huge statue of and monument to Jenks; this project is a clash of the two men’s will-to-power, since Herrin is both glorifying Jenks and solidifying his tyrannical rule; and yet at the same time Herrin is asserting his own superiority over Jenks, since the implication of the piece is that Jenks needs Herrin’s artistic genius in order to claim supreme status. It is a prototypical example of how society is grounded in the Hegelian master-slave dialectic, or perhaps of Nietzsche’s vulgarization of this dialectic in his vision of hierarchies generated by conflicts of clashing wills-to-power.

Once the statue and monument are finished, Jenks understands that he has been both elevated and consigned to his place. In order to reinforce his dominance, he has his goons beat up Herrin and especially break all the bones in Herrin’s hands, so that the artist will never be able to sculpt again. There is a lot in the novella about how Herrin’s mastery is concentrated, not just in his ability to see and imagine, but above all in his manual dexterity in shaping clay and stone to his will. (This reminds me of the scene in Tarkovsky’s movie Andrei Roublev, where an aristocrat has artisans blinded so that they will never be able to construct a house more beautiful than the one that they made for him).

I won’t discuss the later twists of the narrative, except to say that Cherryh plays out the consequences of the collapse of Herrin’s worldview, and his being forced to understand that others exist — both human beings and aliens. Reality is capacious and contradictory; it contains many forces, and nobody can think to dominate and control them all. The way I have expressed it, this seems like an obvious point to make; but in asserting it, Cherryh undermines and deconstructs the pernicious myths that are central to our cultural imaginary, and that have been asserted not only by the most obvious creators and mythmakers (like Ayn Rand and Leni Riefenstahl), but much more widely in the literary and cinematic fictions that we consume and swear by.

The Book of Elsewhere (Keanu Reeves and China Miéville)

The Book of Elsewhere is the first new work of fiction by China Miéville since 2016. (In the interim, he published nonfiction books on the Communist Manifesto and on the Russian Revolution). The book is a collaboration between Miéville and the actor Keanu Reeves. The main character, known as Unute or B, and the basic contours of his world, were originally developed by Reeves for a comic book, or graphic novel, called BRZRKR; its various installments have been co-written by Reeves and a number of comic book authors. The novel massively expands the franchise; and a feature film and an anime series are in development.

Unute is a warrior, born 80,000 years ago, and apparently immortal. He has superhuman strength, and the power to go into a berserker fugue state where he pretty much kills everyone around him. His body recovers quickly from injuries that would be mortal to anyone else; and when he is injured badly enough to actually die, he soon regenerates, breaking out of an egg in full adult form. He is also blessed, or cursed, with the complete memory of all his experiences over thousands of years; though he is not conscious during, and therefore does not later remember, the short periods during which he regenerates in the egg.

All this is recounted, in outline, in the original graphic novel. (There are three volumes of BRZRKR written by Reeves in collaboration with Matt Kindt, which together form one continuous narrative; two additional stories, written by Reeves with Steve Skroce and Mattson Tomlin respectively, provide additional incidents in Unute’s career. In all these cases, I am only listing the writers; a number of visual artists collaborate as well).

The Book of Elsewhere, with its considerable length, allows for a great expansion of things that were only sketched briefly in the graphic novels. We mostly see Unute in the present moment. He is working, uneasily, as part of a special unit of the American (apparently) secret intelligence forces. They send him (together with a crack team of soldiers) to various hot spots around the world, in order to commit assassinations or wipe out groups of (supposed) “terrorists.” Unute doesn’t seem to have any particular commitment to American hegemony, and the military and intelligence authorities cannot really order him to do anything that he doesn’t want to do. But he goes along with their requests in return for having them study him so he can learn more about himself. In particular, Unute is tired of being immortal; he doesn’t want to die, but he wants to be able to die.

The writing is vivid and intense, as we would expect from Miéville. There is a lot of action, both in the present and in a number of flashbacks to Unute’s past, and to stories of individuals whom he encountered briefly over the course of the ages he has been around. There is no scientific agreement about just when Homo sapiens developed a full language, and all of the capabilities we have today; but 80,000 years ago is a reasonable figure. Anatomically modern Homo sapiens has existed for something like 150,000 years, but evidence of cultural achievement is more recent. On the other hand, our ancestors interbred with closely related species (the Neanderthals and the Denisovans) between 50,000 and 20,000 years ago. So we can assume that Unute’s lifespan pretty much coincides with the history of human “species being” (to use Marx’s term).

There are a lot of (pleasurable) digressions and side developments, but the novel is fundamentally concerned with the (philosophical) meaning and nature of Unute, or of the very fact that he exists. He is continually looking for any others who are like him, or who are similarly immortal because they exist in some sort of binary/dialectical opposition to him, but this quest is frequently disappointed. In particular, his murderous abilities do not exist in the abstract, apart from any historical contexts and situations; rather, they are continually being enacted within such contexts and situations, of which working for American power is only the most recent. Whatever Unute may be, he is emphatically not an ahistorical principle of evil or tyranny or fascism.

Unute does, however, turn out to have doubles and/or enemies in certain metaphysical contexts. His nemesis for much of the novel is a large pig, specifically a Babirusa, which seems to have the same powers as he does: it cannot die, or at least it regenerates whenever it is killed. This Babirusa has hunted, and sought to kill, Unute for most of his 80,000 years of existence. In addition, if Unute is a force of Death, as he often considers himself to be, then he is unavoidably in opposition to a force of Life, which itself may be eternally present, or at least eternally reincarnated, in the same way that he is. Unute does have an enemy of this sort. But the enmity of this opponent, and the enmity of the pig as well, change over the course of the novel; and seem in the last analysis only to constitute false oppositions. In a more fully dialectical sense, both Unute and his uncanny doubles seem to be agents of Change, and in this respect they are more similar than they are different, and they are alike opposed to the entropic decline of a universe fated to end in a heat death (as the Victorians mostly believed, and as some physicists today still maintain). I fear I am saying too much, and perhaps giving away spoilers, even to go this far. The theme is worked out in much more careful detail over the course of the novel, and especially in its final sections. I will just say, first, that in the course of his career, although Keanu Reeves has occasionally played bad guys, he doesn’t usually do this; he seems to prefer that, if he is not in a heroic role, then he is at least in an ambiguous one that the audience can identify with in spite of various unpleasant aspects (e.g. John Wick). And in the second place, I will note that China Miéville has played with similar ideas in earlier novels, going all the way back to Isaac’s crisis engine in Perdido Street Station, which is able to mobilize the potentiality for change in any given situation.

I will stop here. In any case, The Book of Elsewhere is a rich book, worthy of both its creators.

Adrian Tchaikovsky, SERVICE MODEL

Adrian Tchaikovsky is one of the most accomplished science fiction writers of the past two decades. He is also remarkably prolific, having published well over thirty hefty novels, together with many shorter works, in the years since 2008. Tchaikovsky also has a great range. He seems reluctant to repeat himself, and has instead explored a wide variety of subgenres in science fiction and fantasy: everything from novels of uplifted animal intelligence (the Children trilogy), to the dying-Earth subgenre (Cage of Souls), to metaphysical space opera (the Final Architecture trilogy), to alternative visions of evolution (The Doors of Eden), to science fantasy with a dollop of horror (Walking to Aldebaran).

Tchaikovsky’s latest novel, Service Model, might be characterized as robot cyberfiction. It recounts the story of a robot’s picaresque adventures in a ruined, posthuman world. “Charles”, as the robot is at first called, initially serves as a valet to a rich man, and is programmed to anticipate his every wish, and to pamper him to a degree far exceeding what even the richest actual human beings today are able to get their servants to do. Charles is content in his position, even though his idle, wealthy employer is clearly a degenerate scumbag (I am using this phrase, which does not appear in the actual text of the novel, in the precise sense in which it is defined by the Urban Dictionary: “a person whose behaviour and attitude holds back the progress of the human race while eroding social solidarity”).

Only one day, without realizing it, Charles slashes his master’s throat while in the process of shaving him. With no master left to serve, Charles has to leave. In addition, since the name “Charles” was only imposed as a feature of his initial position, once that position is gone, so is the name. For the rest of the novel, and following a suggestion from somebody else, the robot calls himself Uncharles instead. (I am only using he/him pronouns here because of the initial name “Charles”; the robot shows no particularly gendered characteristics one way or the other).

Most of the book narrates Uncharles’ search for another source of employment, and secondarily his effort to find out why he murdered his employer, since he cannot discover any reasons to have done so. He sees himself as a mechanism, having tasks to perform, but without anything of the order of needs, desires, and emotions, such as human beings might feel. Uncharles seeks a new job, not for monetary reasons (he has no physical needs as long as he can be recharged from sunlight) but because he still feels a strong impulse to do the sort of work for which he was initially programmed: to be the enthusiastic helper of a living human being. The problem is that the world has been largely destroyed. Pretty much everything has been reduced to debris. The wasteland is heavily populated with robots set adrift, much as Uncharles himself is. Human beings have almost gone extinct; for the most part, the only surviving ones are relegated to hellish situations of continual pain and punishment.

For most of the volume, Uncharles passes through a series of situations that are unattractive for him, and evidently satirical from the point of view of the author and of us as readers. Thinking the murder of his employer results from some sort of mechanical defect, Uncharles goes to a robot repair center that is entirely dysfunctional (which is evidently for the best since its only form of “repair” for broken robots is to terminate them and scavenge their physical remains for spare parts). Uncharles then goes to a sort of farm or factory where the few surviving human beings are compelled endlessly to re-enact their supposed pre-robotic folkways (consisting in straitened living situations, hellish commutes, and meaningless and unending factory labor, though they do not actually produce anything). Then there is a library where all human knowledge is transcribed into 1s and 0s and then erased, with the original sources (books, movies, etc.) also being physically destroyed. After that, there’s an enormous junkyard where robot armies continually battle one another for no discernible reason. And so on. These scenarios are referenced to famous modernist authors, such as Kafka (the bureaucracy of the repair facilities), Orwell (the ceaseless surveillance of the people forced to reenact the most oppressive circumstances of their past lives), and Borges (the library), though this is a joke only for the readers, as it is something the robots themselves remain unaware of.

Uncharles is accompanied on his voyages by another figure known as The Wonk (who turns out to be a human woman in robot disguise — I don’t feel like I am giving away a spoiler here, because the reader realizes that this is the case, long before Uncharles is officially informed of it). She plays Sancho Panza to Uncharles’ Don Quixote, with her comments continually undermining his delusions about his tasks and about the structure of society. She also keeps noting to Uncharles that, in contrast to his original programming, he has developed something like free will. This is an observation that he continually denies, but that readers in the long run judge to be true.

The question of human freedom or flexibility versus robot programming and external determination is also continually raised in the novel’s own language. A close third-person narration is continually describing Uncharles’ reactions to various things by comparing them to human emotional responses, while at the same time disavowing these comparisons by saying things like: Uncharles was acting very much like a person getting angry, though of course as a robot he didn’t feel anger or any other emotions. The novel gets a considerable degree of its power from this sly use of rhetoric, as well as from the evidently satirical and exaggerated characterizations of all the predicaments within which Uncharles finds himself.

In short, Service Model is a brilliant novel, equal in power to many of Tchaikovsky’s other works, but unique among those works in its particular strategies and angles of approach. Its ultimate impact is to blur the distinction between internally-generated and externally-imposed actions and responses, as between what philosophers call dispositions and what common sense refers to as feelings. And therefore it also erodes (even as it overtly affirms) differences between natural and artificial intelligence. This is both the source of the considerable pleasure I took in reading the novel, and the sign of its being a deep philosophical thought-experiment and argument in its own right.

Michael Bérubé, THE EX-HUMAN

Michael Bérubé is one of our best social and cultural critics. He has written important books on cultural studies, disability studies, and the politics of the “culture wars” in academia and beyond. Bérubé’s new book, The Ex-Human, is about science fiction. Bérubé offers thoughtful close readings of a number of classic science fiction texts: Ursula Le Guin’s The Left Hand of Darkness, Cixin Liu’s The Three-Body Problem, Margaret Atwood’s Oryx and Crake, Philip K. Dick’s Do Androids Dream of Electric Sheep? (with some reference to its film adaptation as Blade Runner), Arthur C. Clarke’s 2001 (with some reference to its better-known film adaptation by Stanley Kubrick), and Octavia Butler’s Parable series and Lilith’s Brood series.

Bérubé’s discussions of all these texts are subtle and insightful. But close reading in its own right is not the point of the book. Bérubé includes autobiographical personal reflections, and discusses writing the book in the wake of the COVID-19 pandemic, which deeply changed the dynamics of his own personal and family life, together with everyone else’s. Above all, though, the book is concerned with how science fiction allows us to entertain non-human perspectives upon human life and existence, and specifically to imagine the end of humanity — or rather (and better) its transformation in radical ways that exceed our capacity for imaginative projection and continued empathy.

I am inclined to share Bérubé’s pessimism about human futures. Immanuel Kant said that, regardless of its mistakes, excesses, and bad human rights record, the French Revolution was inspiring and positive because it demonstrated the sheer fact that human beings were capable of rebelling against injustice and envisioning something better. But today we find ourselves facing a sort of inverse of this situation. Regardless of whether Donald Trump gets elected to a second term or not, his widespread support by millions of American voters is frightening and horrific because it demonstrates the sheer fact that human beings are so enthusiastically vicious, bigoted, and sadistic that they are more than willing to embrace a worsening of their own lives, as long as this guarantees that other people will be treated even worse than they are, and that they will retain the privilege of enjoying the spectacle of other people’s suffering, like Christians in Heaven who gain surplus gratification from observing the torments of sinners in Hell. Though Bérubé doesn’t express himself precisely in these terms, he is definitely saying something similar. He cites, for instance, Octavia Butler’s insight that human beings are both highly intelligent and inveterately hierarchical, an extremely deadly combination.

Bérubé chooses the works he discusses precisely because they all share something of this ferociously negative vision, even though many readers/viewers seem to have gone out of their way to avoid acknowledging this. He defines his perspective by identifying it with that of Ye Wenjie, the character in The Three-Body Problem who deliberately contacts the Trisolarans (the aliens who inhabit the Alpha Centauri three-star system), thus encouraging them to invade Earth. Having suffered through the Chinese Cultural Revolution, and subsequent tyrannical and environmentally destructive actions of the Chinese government, Ye Wenjie concludes — mistakenly but not unjustifiably — that an alien invasion might well be the one thing that “can somehow save us from ourselves.” Bérubé is careful to distinguish this position from the more overtly radical one (also dramatized in the novel, and expressed in theoretical terms by such anti-natalists as David Benatar) that straightforwardly seeks the self-annihilation of Homo sapiens altogether.

For Bérubé, then, the greatest virtue of science fiction is its ability to articulate perspectives “in which we have become able to see ourselves and our sorry fate from the vantage point of something other than the human.” Rather than using such common terms as posthuman or transhuman, Bérubé describes his perspective as ex-human. To me, this suggests the scenario of tendering one’s resignation from, and retiring from, the human race, although Bérubé does not quite describe it in these terms. In any case, the term ex-human avoids the self-congratulatory visions of exceeding and transcending the human that we find in Nietzsche, say, or more to the point in recent Silicon Valley TESCREALists (TESCREAL = “transhumanism, extropianism, singularitarianism, cosmism, rationalism, effective altruism, and longtermism”). [You can find out more about TESCREALism here]. For Bérubé, the ex-human “is distinctive in that it is framed by the possibility of human extinction and driven by a desire to imagine that, somehow, another species is possible.” Becoming otherwise is desirable, and may well be a better alternative than remaining conventionally “human”; but it should not be seen as a conquest, a triumph, or a heroic accomplishment.

The novels and films discussed by Bérubé all express this desire in various ways, albeit often tentatively. The bioengineering of a less malevolent successor species to Homo sapiens is most explicitly envisioned in Oryx and Crake. Much more mildly, Ursula Le Guin’s vision of a society that is not governed by gender in The Left Hand of Darkness proposes a modification of “human nature” that is not flawless, but evidently more desirable than not. Though Do Androids Dream of Electric Sheep? is explicitly premised upon the supposition that human beings are able to feel empathy, whereas androids are not, it is set in the aftermath of a human-made catastrophe (nuclear war), and it questions its human-supremacist premise in many other ways as well. 2001 also contrasts banalized human characters with the supercomputer HAL, who is arguably the most empathetic character in both the novel and the movie. 2001, like Androids and Blade Runner, suggests that transcending, or at least reforming, what we understand as “the human” is urgently necessary, and indeed perhaps inevitable.

The richest discussion in The Ex-Human is that in the final chapter, devoted to Octavia Butler. Here Bérubé discusses both the Parable diptych, and the Lilith’s Brood trilogy. The Parable books presciently envision a Trumpian America, and set against it a small-scale utopian community project. In the course of the novels, the protagonist Lauren Oya Olamina does succeed in setting up a worthwhile intentional community, but only after the most horrific and painful tribulations. And in any case, such a result is not scalable to humanity as a whole. This leads Butler to posit space travel and migration to other worlds as a more permanent solution to self-inflicted human suffering; but it is all too symptomatic that Butler was never able to write a third novel in the series that would convincingly envision such a movement. Here Bérubé cites Gerry Canavan’s exploration of Butler’s many abandoned manuscripts. (In fact, this impasse was already encountered in Butler’s early novel Survivor, which she ultimately disowned as a result).

Bérubé then turns to Butler’s earlier Lilith’s Brood series, consisting of Dawn, Adulthood Rites, and Imago. In these novels, Butler envisions an Earth devastated by nuclear war, survived only by a small number of humans who are rescued by an alien species with superior technologies, the Oankali. These aliens define themselves as gene traders; they seek to produce a genetic hybrid between themselves and humans. I won’t go into Bérubé’s reading of this trilogy in detail. I will only note that the biggest interpretive disagreement about the trilogy is over whether we should regard the Oankali as benevolent saviors, or whether we should regard them as manipulative and oppressive colonialists. There is good evidence for both approaches in the books. The Oankali insist, quite plausibly, that human beings are bound to destroy themselves (again; since the premise of the novels is that we have already done so once), unless they accept the deep species modifications that are being offered them. However, the Oankali clearly maintain a position of superiority vis-a-vis the surviving humans, lying to them, forcing them into actions against their will (up to and including what can only be understood as rape), and continually overriding human demands and desires, supposedly for the humans’ own good. Bérubé offers a remarkable synthesis of these two opposed readings; he agrees that the Oankali’s treatment of human beings is entirely, unacceptably nasty; but at the same time he suggests that (from Butler’s own perspective, which Bérubé endorses) such a forcible remaking of humanity into something *other* is preferable to human beings remaining as they are. The human condition as it actually is can only lead to horrors of nuclear holocaust, devastating epidemics, or the re-election of Donald Trump. I have to say — much as I do not want to — that Bérubé has a point.

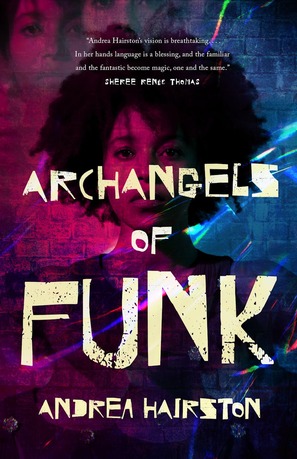

Andrea Hairston, ARCHANGELS OF FUNK

I received an advance copy of Andrea Hairston’s Archangels of Funk, in return for giving an honest review. Here it is. The book will be published in two weeks or so.

Andrea Hairston’s new novel, Archangels of Funk (2024), is a science-fictional sequel to her two previous magical realism / alternative history novels, Redwood and Wildfire (2011) and Will Do Magic for Small Change (2016). The new novel’s heroine, Cinnamon Jones, is now a sixty-year-old woman; those previous novels were about, respectively, Cinnamon’s grandparents (a Black grandmother and a Seminole/Irish grandfather) who leave the deep South and come north to Chicago during the Great Migration of Black people in the first half of the twentieth century, and Cinnamon herself as a teenager in Pittsburgh in the 1980s.

This places Archangels of Funk as occurring in 2030 or so. This is only a few years beyond the actual present in which the book was published, and in which I am writing this note. But things have changed radically during those several years. We have gone through the Water Wars, which are not described in detail in the novel, but which evidently shook things up quite a bit. Large corporations and rich white people still own the world; but not everything is under their control. Cinnamon Jones is part of a thriving multiracial alternative community, including farming cooperatives and centered on the Ghost Mall, a former shopping center now refurbished as a collective gathering place and free kitchen, with lots of space for experimental collaborative projects. This community is hooked in to the global Internet, but it is largely a loose, local aggregation, autonomous from major centers of power and production, more or less self-sufficient in terms of food, and largely reliant on bicycles for transportation, instead of cars. This community is also relaxed and dispersed, resistant to the sort of centralization and totalization that one often finds in both utopian and dystopian visions.

Archangels of Funk is narrated in close third person through the varying perspectives of Cinnamon herself, her close friend Indigo, her dogs Bruja and Spook (both of whom seem to be able to grasp spoken human desires and suggestions, and the latter of whom is cyber-enhanced), and even her three “circus-bots”. These sentient machines are camouflaged, when they are quiescent, as piles of junk; but when they awaken they take on robotic animal forms, and project vivid multimedia spectacles. They are also imprinted with the personalities of Cinnamon’s grandparents and great-aunt. The circus-bots preserve and transmit the wisdom of the ancestors, but they also plunge forward in time in order to generate exuberant new configurations of spectacle.

Hairston is less concerned with narrative than with exploring the textures of everyday life in the changed circumstances of the world that the book presents. The novel is set in just one locality, western Massachusetts, where Hairston herself actually lives; and it takes place over the span of just a few days. Cinnamon is mostly concerned with staging her yearly extravaganza, the Next World Festival. This is a “community carnival-jam”: a gigantic theatrical spectacle, highly participatory, played in an outdoor amphitheater, and filled with song and dance, as well as with seemingly magical masks and costumes, together with splendiferous props and sets. Everything in the Festival both calls back to African American history and leaps forward to envision social and personal transformations. A Mothership lands, recalling George Clinton and Parliament/Funkadelic. Careful planning serves for the proliferation of play, rather than for any more instrumental purpose.

Cinnamon is by no means anti-technology. But she is careful with her gadgets and inventions, because she is all too aware of how computational devices in the 21st century serve purposes of dissuasion and surveillance. Her house is configured as a Faraday cage, and she never carries or uses a cellphone. Her bots are always gathering data, and anxious to give advice; but they are also booby-trapped to prevent corporate spies from seizing and reverse-engineering them. There is also considerable attention paid to physical safety. The surrounding woods are rife with robbers looking for a quick score, as well as with “nostalgia militias,” dudes roaming about in combat gear trying to bring back the old days of MAGA values. But their efforts are somewhat hampered, fortunately; though they seem to have lots of guns, they are mostly empty because bullets, bombs, and other munitions seem to be quite scarce.

Cinnamon and her friends are not just happy-go-lucky creators, however. They suffer from depression, relationship problems, bouts of fake nostalgia, and other all too real psychological symptoms. The ill effects of global warming are ever-present: “deny climate change all you want, but when that brushfire rolls up on your ass, you run or burn”. There are also kids whose parents are missing, visitors with murky and perhaps dangerous agendas, and so on. The novel is, at least in part, about how to negotiate such difficulties. It is psychologically incisive, even as it values lateral connections with others over the narcissism of deep interiority. Cinnamon is adamant that she is “too busy” to be “waiting for love,” but she remains open to chance encounters and unexpected opportunities. People always seem to be engaging in

Archangels of Funk has no deep, mythical narrative, no grand, overarching Story: this is precisely because everything in the book is composed of little stories, told and experienced, involving exchanges and transformations on multiple levels. There is no firm line between actuality and dream, or between technology and magic. Hairston’s prose style strikes me as unique, and it is ultimately what draws everything together. The writing is liquid and mercurial, stopping to capture unexpected details, passing between interior monologue and physical description, then turning and flowing away from what you thought was important, and drawing your attention somewhere else. When I finished reading Archangels of Funk the other night, I felt a bit confused because I was hoping for more. The final dialogue, between Cinnamon, her bots, and some long-ago friends who have shown up long after she expected never to see them again, suggests a never-ending adventure. You may become tired of adventure and seek to rest for a while, but the energy will return, at least until you have passed (as all people and all things ultimately must). “You’re a dream the ancestors had” — which is true enough, except vice versa is true as well. “We’re in a sacred loop”; there is no goal except continuing to play and to circulate. “Nobody makes up their own mind,” because our minds, like our bodies, are continually in motion, continually intertwined with others. (Earlier, we had been told that “mind was always a community affair”). And: “here we are at the end of the world,” Cinnamon finally says, “thinking up what the next world will be.” And: “I am who we are together.”

Cory Doctorow, THE LOST CAUSE

Cory Doctorow’s latest science fiction novel, THE LOST CAUSE, is a book about both the politics and the technics of responding to climate change. The novel is set in the near future, perhaps thirty years from now, in Burbank, California. Brooks, the narrator and protagonist, is a 19-year-old white man, a high school graduate, who inherits a private house when his grandfather passes away. The grandfather, who raised Brooks after his parents died when he was 8, was a MAGA climate change denier; the two always argued. Now Brooks is entirely on his own.

The political background to the novel is important. In the time between our actual moment and the present of the novel, global warming has only become much worse than it is now. A progressive US President has passed comprehensive Green New Deal legislation, guaranteeing jobs and a reasonable income for all. The jobs guarantee mostly takes the form of temporary employment involving all sorts of environmental remediation. Brooks and his friends do not worry about long-term careers; they take these short-term jobs one after another, working hard but knowing not only that they are economically secure, but also that their work offers our only hope for averting worldwide catastrophe.

Things are already really bad, with areas of the United States and other countries rendered uninhabitable due to heat, drought, and chemical pollution, and loads of people forced out of their homes and neighborhoods due to unviable conditions. The only hope these refugees have is to rely upon the goodwill of strangers: people in other regions who agree to take them in. In places like Southern California that are still functional for the time being, a lot of construction work is necessary, both to house these refugees, and to remediate and replace environmentally unviable practices and structures.

Brooks invites refugees into his home (which is the kind of 20th-century private residence that is way too big to house just a single person or small family), and eventually tears his house down in order to build a multiple-residence structure instead (a four-story building with two apartments big enough for a family on each floor). This can be done quickly and cheaply, thanks to advances in building technology: modular, prefabricated parts that are resilient and inexpensive, and easy enough to assemble together that the entire four-story building can be constructed by a crew of 15 people or so in less than a week. Doctorow, as is his wont, goes through all the technical details at almost excruciating length. Such is not my favorite sort of writing; but I think that it is justified here, because Doctorow needs to make the point (and succeeds in making it) that this is not fantasy, but something that will soon (if not quite yet) be realistic on a technological level. He convinces the reader that, in our society today, we have the expertise and the resources (without straining the environment yet further) to do things like this.

Even at best, Doctorow tells us, this is not a quick solution to climate change, nor even a quick fix for immediate emergency problems. It is rather an ongoing process, that Brooks and his friends can expect to continue for their entire lives. We are not given anything like utopia, but Doctorow’s vision is nonetheless hopeful rather than grim. The extensive Green New Deal provisions in the near-future of the novel are what make this sort of vision viable (in a way that “market-based solutions” are not). To avert climate catastrophe will require a lot of hard work, but in a way that involves feelings of satisfaction and solidarity. The alternative (deepening disruption of the climate) is too horrible to consider.

The point of Doctorow’s novel is that there are no technological obstacles to such relative improvement (alleviation and remediation, if not complete reversal of global warming and widespread pollution). Rather, the obstacles are political. Brooks and his cohort in general seem both eager and idealistic. They know that the danger of climate change is undeniable, but that responses are available that are not futile. The problem is that there are way too many people who are trying to stop them.

The plutes (plutocrats) and their allies seek to block the necessary changes, because they don’t want to give up their privileges, their wealth, and their power. They think that they can wait out the climate disasters, with their power and money intact, regardless of what happens to everyone else. We meet them in the novel in the form of the Flotilla, a seasteading enterprise of the sort advocated by extreme libertarians; people live on ships they own, sized from small vessels to aircraft carriers, that remain more than 12 miles from shore in order to escape the jurisdiction of the US or any other government. This has its attractive side, if you are one of the owners — but not if you are one of the workers keeping those ships running, or one of the multitude who cannot find a place upon them. China Miéville has written at length about these false paradises; Doctorow only gives them a subordinate focus, but shows well enough how they can only be the solution for a small privileged class.

The more immediate danger faced by Brooks and his friends is that of the MAGAs — mostly older white men, middle class and well-to-do, who are embittered about the changes that they see around them, and which threaten their sense of superiority. Brooks’ grandfather dies early in the book; but all his cronies are still around, and they have automobiles (one of the luxuries they refuse to give up), not to mention weapons (AK-15s and the like) to enforce their anger. They obstruct change in any way they can, from swarming political meetings in order to outshout their opponents, to seizing public spaces in order to enforce what they consider to be their property rights, to bombing government buildings, to making “citizens’ arrests” of Brooks and his friends in order to stop them from building a place to house refugees. They are aided behind the scenes by the plutes, who employ lawyers invoking spurious grounds to crack down on new housing construction and other climate alleviation procedures.

The novel has something of a repetitive structure. Each time Brooks and his friends are in the process of doing something useful, the MAGAs show up to stop them. This happens again and again, in nearly every chapter. It is frustrating and perhaps a bit repetitious, but Doctorow is right to compose the novel in this way, because it reinforces his double points: first, that even at the best, climate remediation and rights for climate refugees will involve extensive difficulties; and second, that political divisions in the US are so severe and extreme at this point, that any good faith attempt to actually alleviate climate conditions can easily lead us to the brink of civil war.

Doctorow offers no good solutions for countering the MAGAs; they are almost certainly a minority, but they have money and guns, and backers among the rich and within the media. No matter what we do, they will not go away. Doctorow even bends over backwards to get us to understand that these people are not just somehow intrinsically evil. They have their own desires and demands, which make sense to them; they have their own vision of the good life which they used to have, and which they are not wrong to see as slipping away. (If your sense of a fulfilling life includes an enormous mansion and a gas-guzzling vehicle that allows you to go anywhere and everywhere without obstruction, then you may well find the new environmental constraints to be a limit upon your freedom; and you will probably blame young people and foreigners and people of color for your torment). Brooks, to his credit, tries to understand where they are coming from; and Doctorow pushes the point that, if we simply demonize these people, we run the risk of becoming as vicious and intolerant as they are.

I could probably go on at considerably more length detailing the ins and outs of all these situations, and tracing how thoughtful the novel is in facing them frankly, rather than pushing them under the carpet or simply arguing them away. But I think I have done enough to explain what is at stake. The novel is at once remarkably optimistic, since it shows us ways that we might really be able to alleviate the oncoming disaster of global warming and its accompanying dangers. But at the same time, it also leaves me (or leaves a side of me) in despair, because it refuses to diminish the dangers and near-impossibilities that we face. I will not spoil the novel’s conclusion here, but only say that it powerfully balances the triumph of Brooks and his friends with an ecological disaster that they could never have imagined.